Understanding Google Indexation

Before your website can appear in Google search results, it must first be crawled and indexed. Crawling is the process where Google's bots discover your pages by following links across the web. Indexing is the process where Google analyzes the content of each crawled page and stores it in its massive database. Only indexed pages can appear in search results, which means that if Google has not indexed a page, it is effectively invisible to anyone searching on Google.

Many website owners assume that publishing a page automatically means Google will find and index it. In reality, indexation is not guaranteed. New websites, pages with limited internal links, pages blocked by technical configurations, and content that Google considers low quality may never make it into the index. This is why regularly checking your indexation status is a critical part of any SEO strategy.

How Google Indexation Works

The indexation process follows a specific sequence that every SEO professional should understand:

- Discovery: Googlebot discovers your URL through links from other pages, your XML sitemap, or a direct submission via Google Search Console

- Crawling: Googlebot visits the URL and downloads the page content, including HTML, CSS, JavaScript, images, and other resources needed to render the page

- Rendering: Google renders the page as a browser would, executing JavaScript and building the complete DOM to understand the fully loaded content

- Indexing: Google analyzes the rendered content, extracts key information like text, metadata, and structured data, and adds the page to its index with associated ranking signals

- Ranking: When a user performs a search, Google retrieves relevant indexed pages and ranks them based on hundreds of factors including relevance, authority, and user experience
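The discovery step above mentions XML sitemaps. As a minimal sketch, a sitemap in the sitemaps.org format can be produced with Python's standard library. The function name `build_sitemap` and the example URLs are illustrative assumptions, and a real site would usually let its CMS or framework generate this file.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a sitemap XML string listing the given absolute URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as "&" in the URL for us.
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    print(build_sitemap(["https://example.com/", "https://example.com/blog/"]))
```

The resulting file is typically saved as sitemap.xml and submitted in Google Search Console, which helps with discovery but does not guarantee indexing.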

Common Reasons Pages Are Not Indexed

If you discover that important pages are missing from Google's index, one or more of these factors may be responsible:

- Robots meta tag: A noindex directive in your page's robots meta tag (or in an X-Robots-Tag HTTP response header) explicitly tells Google not to index that page. This is sometimes added intentionally but can also be left in place accidentally after a site migration or when a staging site goes live

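- Robots.txt blocking: If your robots.txt file disallows crawling of a URL or directory, Googlebot cannot fetch those pages or read their content. A blocked URL can occasionally still appear in results without a description if other pages link to it, but Google cannot evaluate the page itself, so it is unlikely to rank. Check your robots.txt file to ensure important pages are not inadvertently blocked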

- Canonical tag issues: A canonical tag pointing to a different URL signals to Google that the current page is a duplicate and that the other URL should be indexed instead. Google treats this as a strong hint rather than a strict rule, but incorrect canonical tags can still prevent important pages from being indexed

- Crawl budget limitations: Large websites with millions of pages may find that Google does not crawl all pages within a reasonable timeframe. Optimizing your crawl budget by removing low-value pages and improving site architecture helps ensure important pages get crawled

- Low quality content: Google may choose not to index pages it considers thin, duplicate, or lacking unique value. Pages with very little content, automatically generated text, or content that closely duplicates other pages on your site are at risk

- Server errors: If Googlebot encounters 5xx server errors when trying to crawl your pages, it will retry but may eventually give up. Persistent server issues can lead to pages being dropped from the index
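Three of the causes above (robots.txt rules, a noindex meta tag, and a canonical tag pointing elsewhere) can be spot-checked from a page's HTML and the site's robots.txt. The following is a rough sketch using only the Python standard library. The names `find_blockers` and `_HeadSignals` are hypothetical, the page HTML and robots.txt text are assumed to have been downloaded already, and the check does not look at HTTP headers, render JavaScript, or reproduce every rule of Google's own robots.txt parser.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin
from urllib.robotparser import RobotFileParser


class _HeadSignals(HTMLParser):
    """Collects robots meta directives and the canonical URL from a page."""

    def __init__(self):
        super().__init__()
        self.robots = []       # directives from <meta name="robots|googlebot">
        self.canonical = None  # href of <link rel="canonical">

    def handle_starttag(self, tag, attrs):
        attrs = {k: (v or "") for k, v in attrs}
        if tag == "meta" and attrs.get("name", "").lower() in ("robots", "googlebot"):
            content = attrs.get("content", "")
            self.robots += [d.strip().lower() for d in content.split(",")]
        elif tag == "link" and "canonical" in attrs.get("rel", "").lower().split():
            self.canonical = attrs.get("href") or None


def find_blockers(url, html, robots_txt, user_agent="Googlebot"):
    """Return a list of reasons the URL might not be indexed (empty if none found)."""
    reasons = []

    # 1. robots.txt: is the crawler allowed to fetch this URL at all?
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    if not rules.can_fetch(user_agent, url):
        reasons.append("robots.txt disallows crawling")

    # 2. and 3. Signals inside the HTML itself.
    signals = _HeadSignals()
    signals.feed(html)
    if "noindex" in signals.robots or "none" in signals.robots:
        reasons.append("robots meta tag contains noindex")
    if signals.canonical:
        target = urljoin(url, signals.canonical)  # resolve relative hrefs
        if target.rstrip("/") != url.rstrip("/"):
            reasons.append(f"canonical points to {target}")
    return reasons
```

An empty list only means none of these three signals were found. Crawl budget, content quality, and server errors need other data, such as server logs or Search Console.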

How to Check Your Indexation Status

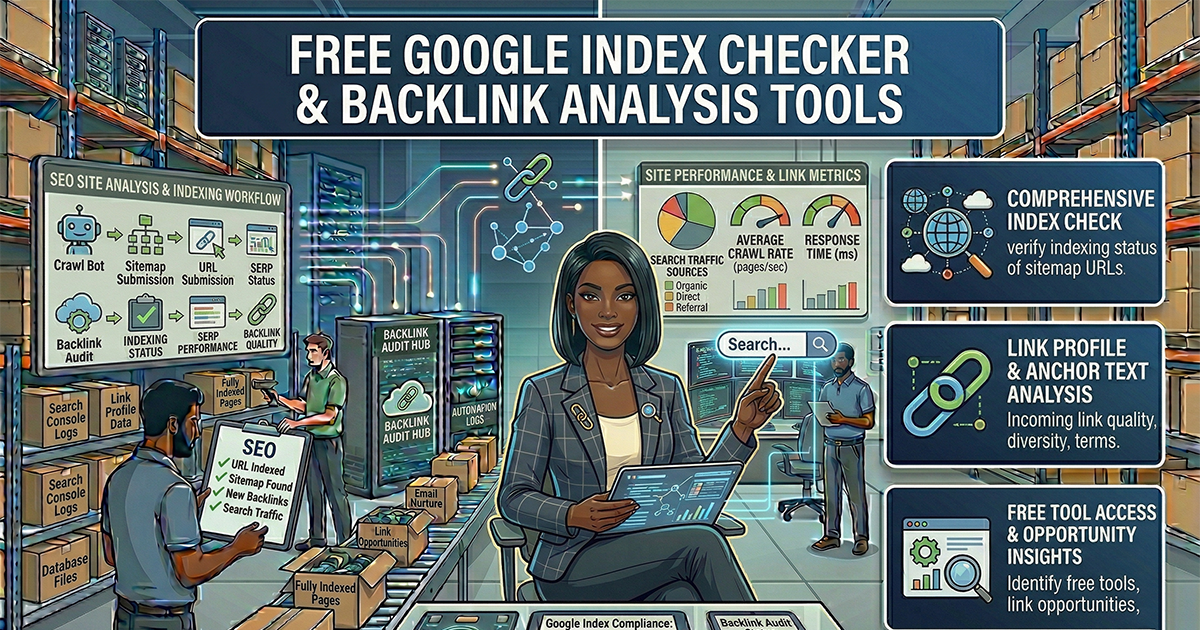

Our Google Index Checker tool allows you to quickly verify whether specific pages on your website have been indexed by Google. Simply enter one or more URLs, and the tool will check each one and report its indexation status. This is faster and more convenient than manually searching for each URL in Google.

For a comprehensive view of your site's indexation, you should also use Google Search Console's Page indexing report (formerly called the Coverage report), which shows how many pages are indexed, which pages are not indexed, and the specific reason for each status. Our tool complements Search Console by providing quick spot checks without needing to log into any account.

Backlink Analysis: Understanding Your Link Profile

Backlinks, also known as inbound links, are links from other websites that point to your pages. They have been one of the most important ranking factors in Google's algorithm since the company's founding, and they remain critical today. Each backlink acts as a vote of confidence from one website to another, telling search engines that your content is valuable enough for someone else to reference.

Why Backlinks Matter for SEO

The quantity and quality of backlinks pointing to your website directly influence your search rankings in several ways:

- Domain authority: Websites with strong backlink profiles are perceived by search engines as more authoritative and trustworthy. This authority is sometimes estimated using third-party metrics like Domain Rating or Domain Authority, which attempt to quantify the strength of a site's link profile. These scores come from SEO tool vendors, not from Google, so treat them as a comparison aid rather than a ranking factor

- Page authority: Individual pages that receive direct backlinks gain ranking power for the keywords they target. A single high-quality backlink from an authoritative source can be worth more than hundreds of links from low-quality sites

- Referral traffic: Beyond SEO value, backlinks drive direct referral traffic when users click on links to visit your site. Links from high-traffic websites can send significant numbers of visitors

- Faster indexation: Pages that receive backlinks from frequently crawled websites tend to be discovered and indexed by Google faster than pages with no external links

- Trust signals: Links from trusted domains in your industry signal to search engines that your website is a legitimate and reliable source of information

Types of Backlinks

Not all backlinks are equal. Understanding the different types helps you evaluate the quality of your link profile and identify opportunities for improvement:

- Editorial links: The most valuable type. These are links that other websites place in their content because they genuinely find your content useful or reference-worthy. They occur naturally and carry the most weight with search engines

- Guest post links: Links earned by contributing high-quality content to other websites in your industry. When done authentically, these provide legitimate value to readers and build authority

- Resource page links: Many websites maintain curated lists of useful resources in their field. Getting your content listed on relevant resource pages provides both authority and targeted referral traffic

- Directory links: Links from industry-specific directories and business listings. While general directories carry less weight than they once did, niche industry directories still provide value

- Social profile links: Links from social media profiles and business pages. While typically marked as nofollow, they contribute to your overall online presence and can drive referral traffic

- Forum and community links: Links from relevant discussions in forums and online communities. These should be genuine contributions to conversations, not spam

Analyzing Your Backlink Profile

Our Backlink Checker tool provides a comprehensive analysis of the backlinks pointing to any domain or specific URL. The analysis includes the total number of backlinks discovered; the number of unique referring domains, which is often more important than the raw link count; the split between dofollow and nofollow links; an anchor text breakdown showing the most common link text; and your most authoritative backlink sources.
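To make these metrics concrete, here is a small sketch of how they could be computed from an exported list of backlinks. It is not how our tool is implemented. The `Backlink` record, its field names, and the `summarize` function are assumptions for illustration, and counting each hostname as its own referring domain is a simplification (subdomains of one site are counted separately).

```python
from collections import Counter
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Backlink:
    source_url: str   # page that contains the link
    anchor_text: str  # visible link text
    nofollow: bool    # True if the link carries rel="nofollow"


def summarize(backlinks, top_n=3):
    """Summarize link count, referring domains, follow split, and top anchors."""
    domains = {urlparse(b.source_url).netloc.lower() for b in backlinks}
    nofollow = sum(b.nofollow for b in backlinks)
    anchors = Counter(b.anchor_text.strip().lower() for b in backlinks)
    return {
        "total_backlinks": len(backlinks),
        "referring_domains": len(domains),
        "dofollow": len(backlinks) - nofollow,
        "nofollow": nofollow,
        "top_anchors": anchors.most_common(top_n),
    }


if __name__ == "__main__":
    links = [
        Backlink("https://blog.example.org/a", "Acme Tools", False),
        Backlink("https://blog.example.org/b", "acme tools", False),
        Backlink("https://news.example.net/x", "click here", True),
    ]
    print(summarize(links))
```

In this sample, three links come from only two hostnames, which is why the referring domain count is usually the more telling number.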

What to Look For in Your Backlink Analysis

When reviewing your backlink profile, pay attention to these key indicators:

- Referring domain diversity: A healthy backlink profile includes links from many different domains rather than many links from just a few sites. High diversity signals natural link building

- Link quality over quantity: Ten links from authoritative, relevant websites in your industry are worth more than a thousand links from random low-quality sites

- Anchor text distribution: Natural backlink profiles have varied anchor text including branded terms, naked URLs, generic phrases like "click here," and some keyword-rich anchors. An unnatural concentration of exact-match keyword anchors can trigger penalties

- Link growth patterns: Healthy link profiles grow steadily over time. Sudden spikes in backlinks can appear manipulative and trigger algorithmic reviews

- Toxic links: Links from spammy, irrelevant, or penalized websites can actually harm your rankings. If you identify toxic links in your profile, consider using Google's disavow tool to tell Google to ignore them
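If you do decide to disavow links, Google's disavow tool expects a plain text file with one entry per line: either a full URL, or a whole domain written with the `domain:` prefix, with optional comment lines starting with `#`. The helper below is a minimal sketch for assembling such a file. The function name `build_disavow_file` and the example domains are hypothetical, and deciding which links are actually toxic remains a manual judgment the code does not make.

```python
def build_disavow_file(domains=(), urls=(), note=""):
    """Return disavow file text: optional comment, domain entries, then URL entries."""
    lines = []
    if note:
        lines.append(f"# {note}")
    # Normalize and de-duplicate domains so each appears once.
    unique_domains = sorted({d.strip().lower() for d in domains})
    lines += [f"domain:{d}" for d in unique_domains]
    # Individual URLs are listed as-is, one per line.
    lines += sorted(set(urls))
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(build_disavow_file(
        domains=["spam.example"],
        urls=["http://bad.example/page.html"],
        note="Links reviewed manually",
    ))
```

Save the output as a UTF-8 text file before uploading it, and keep a copy, since uploading a new file replaces the previous list.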

Focus on earning backlinks through quality content rather than building them through artificial means. Create content that people genuinely want to reference, share, and link to. This is the most sustainable and effective link building strategy.

Combining Index Checking and Backlink Analysis

These two tools work together to give you a complete picture of your site's search engine presence. Use the Google Index Checker to verify that your important pages are actually in Google's index and visible to searchers. Then use the Backlink Checker to understand the link authority flowing to those pages and identify opportunities to strengthen your link profile.

Pages that are indexed but have few backlinks may struggle to rank competitively. Pages with strong backlinks that are not indexed represent wasted link equity. By monitoring both metrics, you can ensure that your SEO efforts are working in harmony, with every important page indexed and supported by a strong backlink profile. Both tools are completely free and available for immediate use.
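The two cases described above can be turned into a simple triage once you have both data points for each page. The sketch below assumes you have already collected, for every URL, whether it is indexed and how many referring domains it has. The function name `triage`, the bucket names, and the `min_domains` threshold of 3 are arbitrary illustrative choices, not recommendations.

```python
def triage(pages, min_domains=3):
    """Group URLs by index status and link strength.

    pages maps each URL to a tuple (is_indexed, referring_domains).
    """
    report = {
        "healthy": [],               # indexed and well linked
        "needs_links": [],           # indexed but few referring domains
        "wasted_link_equity": [],    # has backlinks but is not indexed
        "not_indexed_no_links": [],  # neither indexed nor linked
    }
    for url, (indexed, ref_domains) in pages.items():
        if indexed:
            bucket = "healthy" if ref_domains >= min_domains else "needs_links"
        else:
            bucket = "wasted_link_equity" if ref_domains > 0 else "not_indexed_no_links"
        report[bucket].append(url)
    return report


if __name__ == "__main__":
    print(triage({
        "https://example.com/guide": (True, 12),
        "https://example.com/new-post": (True, 0),
        "https://example.com/old-landing": (False, 5),
    }))
```

Pages in the wasted_link_equity bucket are usually the first to investigate, because fixing an indexing problem there makes existing links count.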